Of 226 named AI leaders in the Atlas, 71 have moved between labs at least once. That graph has 62 directed transition edges across 29 source labs and 45 destination labs, and the largest single edge (OpenAI to Anthropic, with 8 confirmed crossings) is the visible tip of a structural reorganisation the funding numbers have not yet caught up to. The talent map is the leading indicator for the capital map, eighteen months out.

The Atlas refresh on May 11, 2026 captured the people layer at its current scale: 226 named individuals across 249 labs, with 71 of them holding multi-lab career histories. Building a directed graph from those histories produces 62 distinct lab-to-lab transition edges. The graph is the closest thing in the dataset to a structural ground truth about how the frontier is reorganising, because moving people is a slow and expensive decision and the resulting signal is harder to fake than a funding round or a model release. I have been looking at this dataset for a few weeks and the more I read it the less the public narrative about the frontier maps onto what the graph actually shows. The four labs commonly called "frontier" are not symmetric. The exodus cohort is not a generic startup wave. The China graph is not a gap in the data. The European academic pipeline is not feeding European frontier labs. Each of those is a separable claim and the dataset supports each one on its own terms.
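A minimal sketch of how a directed transition graph can be derived from career histories, for readers who want to see the counting rule made explicit. The `careers` records and the `transition_edges` helper are illustrative assumptions, not the Atlas's actual pipeline or data; the only rule taken from the text is that a person's first lab is an initial affiliation and each later change of lab is a directed edge.

```python
from collections import Counter

# Hypothetical career histories: person -> ordered list of labs.
# The first entry is an initial affiliation, not a transition.
careers = {
    "person_a": ["OpenAI", "Anthropic"],
    "person_b": ["OpenAI", "Anthropic", "Thinking Machines Lab"],
    "person_c": ["Meta AI", "AMI"],
    "person_d": ["Google DeepMind"],  # single-lab career: contributes no edge
}

def transition_edges(careers):
    """Count directed lab-to-lab moves across all career histories."""
    edges = Counter()
    for labs in careers.values():
        # Pair each lab with the next one in the same career.
        for src, dst in zip(labs, labs[1:]):
            if src != dst:
                edges[(src, dst)] += 1
    return edges

edges = transition_edges(careers)

# People with at least one move, and the distinct source / destination labs.
movers = sum(1 for labs in careers.values() if len(set(labs)) > 1)
sources = {src for src, _ in edges}
destinations = {dst for _, dst in edges}

print(len(edges), "distinct edges;", movers, "people with multi-lab histories")
```

On the real dataset the same counts would correspond to the 62 distinct edges, 71 movers, 29 source labs, and 45 destination labs quoted above.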

This essay walks through the five structural observations the graph supports, anchors each one to specific named-individual arcs, then argues the larger thesis: that talent flow leads capital flow by roughly eighteen months, and that the current talent map predicts a frontier-lab landscape in mid-2027 that looks meaningfully different from the one priced into late-2025 funding rounds.

The frontier is not symmetric

The "frontier seven" or "frontier four" framing treats OpenAI, Anthropic, Google DeepMind, and Meta AI as peer competitors fighting for the same talent pool. The transition graph treats them as four labs with structurally different roles inside a single system.

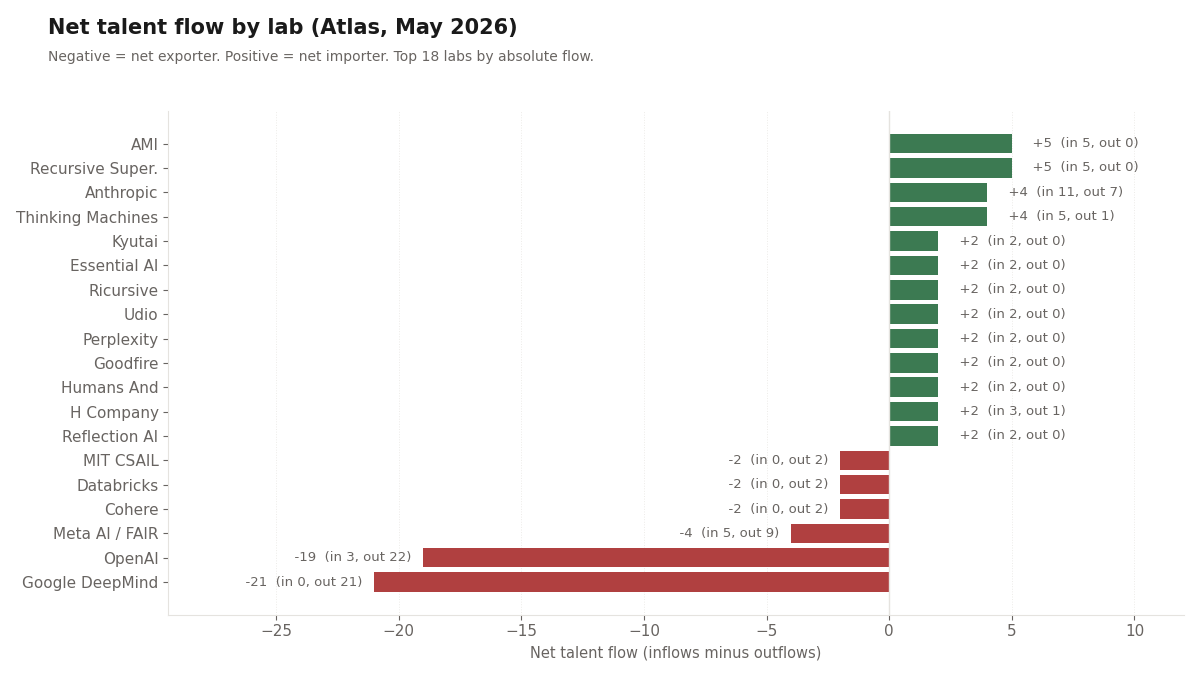

Anthropic is the only net importer of the four. The dataset shows 11 confirmed inflows and 6 confirmed outflows, for a net of +5. The other three are all bleeding people. OpenAI sits at 22 outflows against 3 inflows for a net of −19. Google DeepMind shows 0 inflows in the dataset against 21 outflows for a net of −21. Meta AI shows 5 in and 10 out for a net of −5. Across the four, the combined net flow is −40. Of those 40 net departures, more than half end up at five labs: Anthropic (the +5 frontier importer), Recursive Superintelligence (+5), AMI (+5), Thinking Machines Lab (+4 net), and H Company (+3).
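The arithmetic in that paragraph is easy to check by hand, but a short sketch makes the bookkeeping explicit. The inflow and outflow counts are the ones stated above; the variable names are my own.

```python
# (inflows, outflows) for the four frontier labs, as stated in the text.
flows = {
    "Anthropic": (11, 6),
    "OpenAI": (3, 22),
    "Google DeepMind": (0, 21),
    "Meta AI": (5, 10),
}

# Net flow per lab: positive means net importer, negative means net exporter.
net = {lab: inflow - outflow for lab, (inflow, outflow) in flows.items()}
combined = sum(net.values())

# Net gains at the five absorbing labs, as stated in the text.
absorbers = {
    "Anthropic": 5,
    "Recursive Superintelligence": 5,
    "AMI": 5,
    "Thinking Machines Lab": 4,
    "H Company": 3,
}
absorbed = sum(absorbers.values())

print(net)        # Anthropic +5, OpenAI -19, Google DeepMind -21, Meta AI -5
print(combined)   # -40
print(absorbed)   # 22, which is more than half of 40
```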

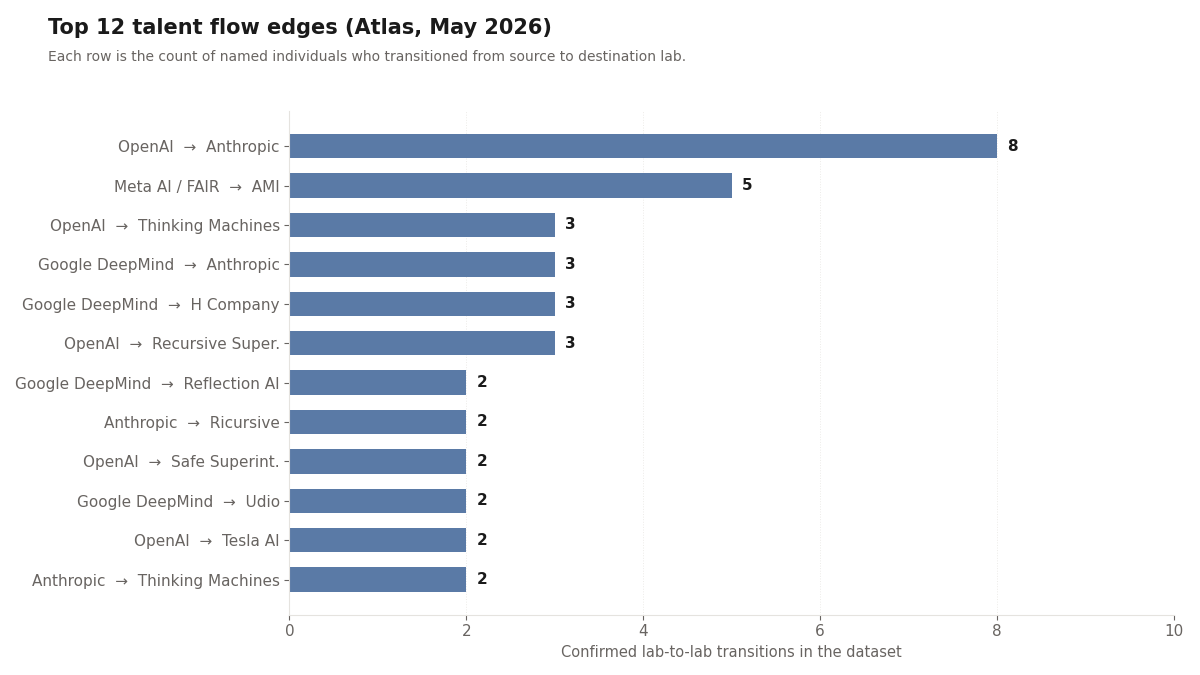

The composition of Anthropic's intake is the second part of the asymmetry. Of its 11 inflows, 8 are from OpenAI and 3 are from Google DeepMind. Zero inflows originate outside another frontier lab. Anthropic's hiring funnel is essentially "recruit from the other frontier labs," and the recruiting is working at a scale that no other lab in the dataset matches. The single OpenAI to Anthropic edge of 8 is the largest edge in the entire graph. By comparison, the second-largest edge (Meta AI to AMI at 5) involves a lab that did not exist in mid-2025.

I want to be careful about the framing here, because "net importer" is doing a lot of work in a small dataset. The graph captures named lab-to-lab moves. It does not capture PhD-pipeline hiring directly, because those show up as initial affiliations rather than transitions. So "Google DeepMind: 0 inflows" means "no other lab in the dataset has lost a tracked individual to DeepMind during this measurement window," not "DeepMind hires nobody." DeepMind hires from academia at scale. What it does not do, in the period the dataset covers, is absorb people from peer frontier labs. The directional asymmetry vs Anthropic is the structural finding.

The implications matter. If the four frontier labs were symmetric peer competitors, you would expect roughly balanced inflows and outflows across all four, with the differences explained by compensation, mission fit, and individual circumstance. The actual pattern is one attractor and three feeders. That is a different kind of system than the one most market commentary describes. It says something specific about the talent value proposition Anthropic has assembled. It also says something specific about the retention problem the other three are facing, and the retention problem is not equally distributed (OpenAI's outflow is concentrated toward Anthropic and toward two or three insurgent labs; DeepMind's outflow is more diffuse; Meta AI's outflow is dominated by AMI). The remedies, if any are coming, would also have to be specific to each lab's pattern.

The composition of Anthropic's 8-from-OpenAI intake is worth naming directly, because the count compresses two distinct events. Seven of the crossings cluster in the 2021 founding cohort: Dario Amodei (then OpenAI's vice president of research), Daniela Amodei (then vice president of safety and policy), Tom Brown (lead author of the GPT-3 paper), Sam McCandlish, Jack Clark, Chris Olah, and Jared Kaplan all departed OpenAI in the same window and incorporated Anthropic as a Public Benefit Corporation. That one founding event accounts for seven of the eight confirmed OpenAI to Anthropic edges. The eighth is John Schulman, the OpenAI co-founder and reinforcement-learning lead, who left OpenAI in August 2024, joined Anthropic later that month, and then moved on to Thinking Machines in November 2024 (a three-month Anthropic tenure that is the shortest senior-researcher arc in the dataset). The graph's only other confirmed Anthropic to Thinking Machines edge is Jared Kaplan's 2024 advisor relationship with the new lab, which the people-layer records as an affiliation while his primary appointment as Anthropic's Co-founder and Chief Science Officer continues. The founding cohort plus the 2024 senior re-routings make Anthropic the structural attractor inside the frontier four, not as a single recruiting event but as a compounding five-year hiring story.

The exodus seeds are clean forks

The new insurgent labs that have absorbed the bulk of frontier-lab outflows are not generic startups. Each one is a clean cohort fork from one or two specific source labs, and the source labs can name the people who left.

AMI, the Paris-based lab anchored by Yann LeCun, is the most concentrated example. All 5 of its confirmed inflows in the dataset come from Meta AI / FAIR. Every individual record at AMI shows a prior Meta affiliation. The lab is, in graph terms, the LeCun-cohort exit from Meta. The five-edge inflow includes LeCun himself, the lab's chief operating officer Laurent Solly, its head of world models Michael Rabbat, its chief research officer Pascale Fung, and its chief strategy officer Saining Xie, all of whom previously held Meta AI affiliations. AMI's $1 billion seed round in March 2026 is the funding-side validation, but the talent-side validation showed up first.

Thinking Machines Lab, Mira Murati's post-OpenAI vehicle, runs a different pattern: a 3-from-OpenAI plus 2-from-Anthropic intake. Murati herself, John Schulman (OpenAI co-founder, who left for Anthropic in 2024 and then for Thinking Machines later that year), and former OpenAI head of safety research Lilian Weng anchor the OpenAI side. The Anthropic side adds research-leadership names that crossed in 2024 and 2025. The pattern is not a single-source cohort fork (like AMI) but a deliberate two-frontier blend. The result is 5 confirmed inflows and 1 outflow, an intake that ties for the second-largest by a single lab in the dataset, behind Anthropic's 11.

Recursive Superintelligence is a 5-inflow lab where 3 inflows come from OpenAI. The OpenAI side includes Jeff Clune (OpenAI's former head of open-endedness research), Josh Tobin, and Tim Shi. The remaining inflows are from research-faculty backgrounds. The lab was founded late 2025 and finalised through early 2026; the talent base assembled in advance of the public launch is the leading edge of the funding round that followed.

Reflection AI is the cleanest Google DeepMind fork in the dataset: 2 inflows, both from DeepMind, both with foundational reinforcement-learning credentials. The visible name is Ioannis Antonoglou, a co-developer of AlphaGo and AlphaZero, who left DeepMind in 2024 to co-found Reflection. Misha Laskin, the second inflow, holds a similar profile. The two-person inflow is small in absolute terms but the talent density per dollar is the highest of any insurgent in the dataset.

H Company, the Paris-based agent-AI insurgent, shows a 3-inflow profile dominated by 2 inflows from Google DeepMind. The remaining inflow is from elsewhere in the European research ecosystem. H Company is part of the small but consequential French insurgent cluster (alongside Mistral AI, Kyutai, and AMI) that has been absorbing European frontier talent rather than letting it flow to the US.

Safe Superintelligence shows 2 confirmed inflows in the dataset, both from OpenAI, with Ilya Sutskever the visible anchor. The lab is the cleanest possible single-arc example: OpenAI co-founder and chief scientist leaves in May 2024, founds Safe Superintelligence in June 2024, and the company raises at frontier-scale valuations on the strength of the founding team's prior affiliations alone. The product surface is still pre-release as of this writing. The capital and the talent both showed up well before any model did.

The common feature across AMI, Thinking Machines, Recursive Superintelligence, Reflection, H Company, and Safe Superintelligence is that none of them is a "generic AI startup." Each one is a cohort-scale migration from a specific source lab, with the source lab able to identify which of its researchers and which of its leadership are now elsewhere. That structure matters for two reasons. First, it makes the talent fork legible: the source labs know which capabilities they have lost and which ones they retained. Second, it makes the insurgent labs more credible as frontier challengers than their founding dates and ages would suggest, because the talent base predates the company. The Atlas's funding-vintages essay traced the same pattern at the capital layer (vintage-2024 and vintage-2025 frontier-insurgent rounds priced at frontier-incumbent multiples); the talent layer was already showing the underlying reorganisation a year earlier.

The hyperscaler retention problem

Google DeepMind and Meta AI are the two frontier labs that sit inside larger hyperscaler parents (Alphabet and Meta, respectively). Both are bleeding people in a way that is structurally different from OpenAI's outflow, and both show patterns that compensation alone cannot fix.

Google DeepMind's 0 confirmed inflows against 21 outflows is the most stark number in the dataset. The lab has lost people to Anthropic (3), Udio (2), Meta AI (2), H Company (2), and Reflection AI (2), plus a long tail of single-person edges to Thinking Machines, Cohere for AI, EvolutionaryScale, and others. None of these are surprising individually. What is surprising in aggregate is that the dataset captures no countervailing inflow during the same window. DeepMind grows by hiring from academia (which the dataset records as initial affiliations rather than transitions) and loses continuously to peers and to insurgents. The lab continues to ship leading research at scale, but the talent base is being thinned at the senior-researcher and research-leadership levels in ways the public outputs do not yet show.

Meta AI's −5 net flow is the more interesting story because Meta's recent capital deployment has been so visible. The lab's 5 inflows include the Scale AI acqui-hire (1 inflow, Alexandr Wang and the senior Scale team) and 4 others. The 10 outflows include 5 to AMI (the LeCun cohort), 2 to Kyutai (the European-research return arc), and individual departures to EvolutionaryScale and other specialised labs. The June 2025 Scale-AI deal at approximately $14 billion in committed capital bought Meta exactly one transition record in the dataset, and the LeCun departure in November 2025 erased that gain and several more besides. The capital-to-talent conversion ratio is poor by any benchmark.

The reason compensation cannot fix this is that the compensation gap has already closed. Frontier-research-tier total comp at Meta and Google now matches or exceeds the cash-plus-equity packages at Anthropic and at most of the insurgent labs. The data point is that researchers at hyperscaler labs continue to leave for insurgent equity and for Anthropic anyway. What the hyperscaler labs cannot offer that the insurgents can: equity in a company that might become a frontier lab, the absence of parent-company strategic alignment overhead, and a mission statement that is not subordinated to a consumer-products business. Those are structural differences, not pay-scale differences, and they are not the kind of thing a recruiting budget can resolve.

Demis Hassabis is the data point worth naming here in the negative. Of all the senior frontier-lab leaders in the dataset, Hassabis is one of the few who has not left his lab. He co-founded DeepMind in 2010, sold it to Google in 2014, took on Google DeepMind leadership in 2023 after the Brain merger, and was running the lab when this dataset closed. He has also held a parallel role as founder and CEO of Isomorphic Labs since November 2021, which is itself an Alphabet subsidiary. The absence of his departure is structurally meaningful. Many of the people who would have plausibly built insurgent labs in 2024 and 2025 had Hassabis at the top of their list of "the leader I would not want to lose." The fact that he stayed is part of why DeepMind has been able to continue shipping research output despite the 21-person outflow underneath him. It is also part of why the insurgent ecosystem has been smaller in scale on the DeepMind side than on the OpenAI side: there is no single high-status DeepMind departure analogous to Sutskever's exit from OpenAI that pulls a cohort with it.

The hyperscaler retention problem will not show up in the public outputs immediately. DeepMind continues to ship state-of-the-art models. Meta AI continues to release Llama generations. But the talent layer leads the model layer by roughly eighteen months, on the same kind of lag that the talent-to-capital arrow runs. The 2027 model cycle is the one that will reveal the cost of the current outflow.

The European subsidy

The transition graph has a structural feature that European policymakers should look at carefully: European academic AI labs are running a one-way talent pipeline into US frontier labs. The reverse flow is essentially zero in the dataset.

The visible exporters are familiar: Inria, EPFL, the Tübingen AI Center, and the Max Planck Institute for Intelligent Systems, alongside the North American academic feeders MIT CSAIL and Mila. Their PhD graduates and senior researchers show up at Anthropic, Google DeepMind, Meta AI, and the insurgent labs that have emerged from those frontier labs. The dataset records no European academic lab as a destination from a US frontier lab. The pipeline runs in one direction.

The three exceptions are worth naming, because they are the start of a possible counter-trend. Mistral AI (founded April 2023 by Arthur Mensch, ex-Google DeepMind, with Guillaume Lample and Timothée Lacroix, both ex-Meta AI / FAIR) is the largest single European reverse-arc in the dataset. Three frontier-lab departures, three founders, one French national champion. Kyutai (founded November 2023 with funding from Xavier Niel, Rodolphe Saadé, and Eric Schmidt) shows 2 inflows from Meta AI, including Edouard Grave (ex-FAIR researcher, now a senior researcher at Kyutai) and a co-founder. AMI (founded late 2025, public launch March 2026) adds the LeCun cohort fork from Meta. Those three labs are the visible counter-trend.

The scale of the counter-trend is still small relative to the outflow. The dataset shows 7 total inflows from Meta AI to French insurgents (5 to AMI, whose entire intake is Meta-sourced, and 2 to Kyutai). It shows 3 founders from frontier labs (Mensch from DeepMind, Lample and Lacroix from FAIR) anchoring Mistral. By contrast, the European-academic-to-US-frontier outflow is at least an order of magnitude larger when measured across the full multi-year window the affiliations capture. France 2030's roughly €7 billion ($7.5 billion) AI commitment is doing meaningful work to slow the outflow, but it has not yet reversed the dominant direction.

The strategic question this raises for European policymakers is not the one usually asked. The usual question is "why doesn't Europe have an OpenAI?" The graph-level question is different: "why does French and Swiss and German state capital fund European academic labs whose graduates then take their training to US frontier labs?" The Atlas's sovereign-AI essay covered the capital side of European sovereignty efforts at length. The talent side adds a specific accountability metric: a sovereign-AI program is successful at the talent layer if the PhDs trained inside its boundaries end up working at labs inside its boundaries. By that metric, French sovereign-AI policy is succeeding for the first time in the dataset (Mistral, Kyutai, AMI, H Company all retaining talent), and the rest of Europe is still subsidising US frontier output.
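The accountability metric proposed above can be written down in a few lines. This is a sketch under stated assumptions: the `records` list is entirely hypothetical toy data (not Atlas figures), and the `trained_in` / `works_in` field names and the `retention_rate` helper are illustrative, since the text does not describe how the people layer encodes PhD location.

```python
def retention_rate(records, region):
    """Share of researchers trained in `region` who now work at a lab in `region`.

    Returns None when no one in the records was trained in that region.
    """
    trained = [r for r in records if r["trained_in"] == region]
    if not trained:
        return None
    stayed = sum(1 for r in trained if r["works_in"] == region)
    return stayed / len(trained)

# Hypothetical toy records, for illustration only.
records = [
    {"trained_in": "FR", "works_in": "FR"},
    {"trained_in": "FR", "works_in": "US"},
    {"trained_in": "FR", "works_in": "FR"},
    {"trained_in": "DE", "works_in": "US"},
]

for region in ("FR", "DE", "CH"):
    print(region, retention_rate(records, region))
```

A rate near 1.0 would mean the program retains the people it trains; a rate near 0.0 would mean it is subsidising labs elsewhere. The metric says nothing about people who were trained abroad and recruited in, which would need a separate count.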

The closed Chinese graph

The transition graph shows essentially zero cross-border movement between Western and Chinese frontier labs in either direction. The Western frontier labs do not receive Chinese frontier-lab alumni. The Chinese frontier labs do not receive Western frontier-lab alumni.

The internal Chinese graph is also smaller than the Western internal graph. Z.AI shows 2 confirmed inflows for a net of +2, which makes it the visible China-side importer in the dataset. DeepSeek, Moonshot AI, MiniMax, StepFun, Alibaba Qwen, Tencent Hunyuan, Baidu Ernie, and ByteDance Seed do not show major lab-to-lab transitions among themselves in the people-extractor refresh that produced this snapshot. The dominant pattern in the Chinese frontier-lab sector is hiring from domestic universities (Tsinghua, Peking, USTC, Fudan, Shanghai Jiao Tong) and from BAAI as a state-anchored research source, with the lab-to-lab transitions among commercial labs running at a lower volume than the equivalent Western graph.

I have to be careful with this claim. The Chinese-side coverage in the Atlas people layer is shallower than the US-side coverage. The dataset captures fewer named individuals at Chinese frontier labs than at Western ones, and the affiliations are less complete. So "essentially zero cross-border movement" is partly an artefact of coverage. The honest reading: the Atlas does not capture cross-border Chinese-Western talent transitions, and to the extent that the underlying graph contains any, the dataset misses them.

That said, the asymmetry vs the Western graph is too large to explain by coverage gaps alone. The single edge OpenAI → Anthropic captures 8 confirmed crossings. The total Western-to-Chinese or Chinese-to-Western edge count is 0. Coverage error explains a difference of one or two; it does not explain a difference of 8 to 0. The policy-level US-China decoupling is mirrored at the talent level in a structural way.

The implications for the "China is catching up" framing are specific. The framing is right at the model-output level (DeepSeek V3, Qwen 3, Hunyuan and Doubao all match or approach frontier Western model quality across benchmarks). The framing is wrong at the talent-flow level if it implies that Chinese labs are absorbing Western researchers. Chinese frontier labs are catching up with a separate talent base trained inside the Chinese university and research system, not by importing trained Western researchers. The compute-side asymmetry (China's domestic-fab and domestic-HBM stack, covered in the sovereign-AI essay) is the visible part of this. The talent-side asymmetry is the invisible part, and it has the same architectural meaning: the two ecosystems are operating as parallel rather than competing in a single talent market.

The strategic question this raises for Western policymakers is whether the closed graph is a stable equilibrium or a transient state. If a single Western-to-Chinese or Chinese-to-Western frontier transition occurs in 2026 or 2027 (a plausible scenario at the senior-researcher level given the size of the global Chinese-diaspora research community), the closed-graph thesis weakens. If no such transition occurs through 2027, the closed graph hardens into a structural fact that Western strategy has to plan around.

Specific arcs that explain the system

The numbers above describe the system at the aggregate level. A handful of named individual arcs explain how the system actually works.

Ilya Sutskever is the cleanest exodus archetype. He co-founded OpenAI in December 2015 as chief scientist, served in that role for eight and a half years, and left in May 2024 after the November 2023 board crisis and its aftermath. One month later, in June 2024, he co-founded Safe Superintelligence with Daniel Gross and Daniel Levy. The lab raised at multi-billion-dollar valuations within months, with no public product and no public model. The deal was visibly on the strength of Sutskever's track record alone. He took one additional confirmed OpenAI researcher with him in the early phase (the second leg of the OpenAI → Safe Superintelligence edge in the graph). The Sutskever arc is the archetype because it shows how short the talent-to-capital lag can be: months, not years, from a verified high-status departure to a frontier-scale funding round priced on its strength.

Yann LeCun is the clean cohort-transfer archetype. He joined Meta AI / FAIR in 2013 as vice president and chief AI scientist, ran the lab for twelve years, and departed in November 2025 after the June 2025 restructuring that placed him under Alexandr Wang (the Scale AI founder who joined Meta as chief AI officer in the acqui-hire). The AMI Labs founding in late 2025, with public launch in March 2026, pulled the five-person Meta cohort that the transition graph captures. AMI is the lab where the talent-to-capital lag is essentially zero: the $1 billion seed round and the cohort migration happened concurrently. The arc shows that when a senior frontier-lab leader departs, the cohort that follows is often pre-recruited inside the source lab, and the resulting capital is available immediately.

Mira Murati is the executive-exodus archetype. She joined OpenAI in 2018, became chief technology officer, served briefly as interim CEO during the five-day November 2023 board crisis that ended when Sam Altman was reinstated, and departed in September 2024. Thinking Machines Lab was founded quietly in 2024 and launched publicly in February 2025. The lab assembled its team across the second half of 2024 and the first half of 2025, including John Schulman (OpenAI co-founder, who had moved to Anthropic in 2024 before joining Thinking Machines later that year) and former OpenAI head of safety research Lilian Weng. The lab's funding round in 2025 priced it at multi-billion-dollar valuations on the strength of its founding team alone. The Murati arc is structurally similar to the Sutskever arc but with a wider talent draw across both OpenAI and Anthropic, and with a closer-to-product positioning than Safe Superintelligence.

Dario Amodei and Daniela Amodei are the prototype that the others are following. Both held vice-president-level roles at OpenAI: Dario as vice president of research, Daniela as vice president of safety and policy. Both left in 2021. They co-founded Anthropic the same year, with a founding team that pulled approximately a half-dozen senior OpenAI researchers with them. The Anthropic founding is the original 2021 case of the cohort-exit pattern, and the company's intake profile since (11 inflows: 8 from OpenAI and 3 from Google DeepMind) is essentially a five-year continuation of the same dynamic. The Amodei arc is also the case that proves the talent-leads-capital thesis at scale: Anthropic in 2021 was a small startup; Anthropic in 2026 is the only net-importer frontier lab in the system and the structural attractor for the OpenAI and DeepMind diasporas. The compounding of the talent base over five years produced the funding outcome of 2024 and 2025.

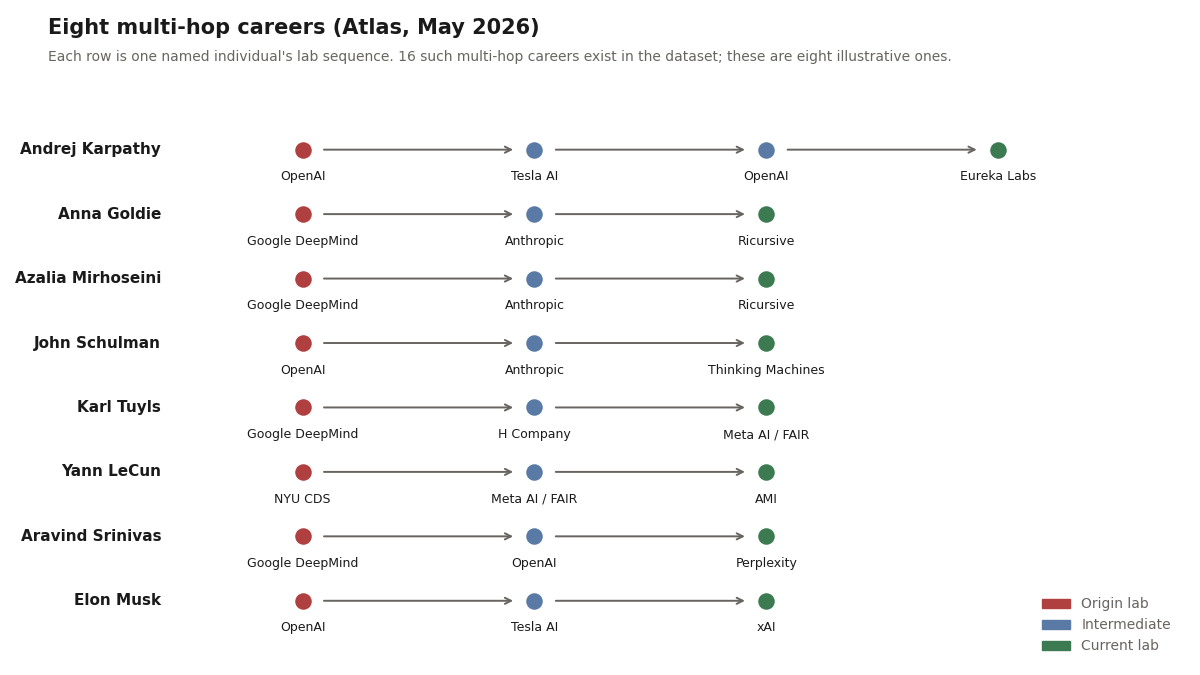

Andrej Karpathy is the unusual arc that doesn't fit the dominant pattern. He was a founding member of OpenAI in 2015, left for Tesla in June 2017 as director of AI and Autopilot Vision, ran Tesla AI for five years, returned to OpenAI in 2023 as a senior research scientist, and left in 2024 to found Eureka Labs, an AI-education startup. The graph captures Karpathy as part of the OpenAI → Tesla edge (which carries 2 transitions) and part of the Tesla → OpenAI return-arc (a smaller edge). His current lab is Eureka Labs, which does not have a frontier-scale funding round and is positioned as an education-products company rather than a frontier challenger. The Karpathy arc matters because it is the visible counterexample to the cohort-exodus pattern: not every high-status OpenAI departure produces an insurgent frontier lab. Some produce specialised companies in adjacent markets. The exodus cohort is not monolithic.

The arcs above all involve frontier-lab departures. The reverse direction is sparse in the dataset but worth naming. The Scale AI to Meta AI edge in mid-2025 (the Alexandr Wang and senior-Scale-team move) is the largest non-frontier-to-frontier inflow into a hyperscaler lab in the dataset. The transaction valuation (approximately $14 billion in committed Meta capital to Scale AI leadership) is the visible cost of one transition record. Five months after the deal, in November 2025, LeCun departed, and AMI's funding had closed by March 2026. The capital flowed one direction; the talent went a different direction.

The double-hop crowd

The 71 multi-lab career histories in the dataset are not evenly distributed across two-stop and longer paths. Sixteen of those 71 careers have three or more distinct lab stops, and that subset is where the leading-indicator signal compresses tightest. A senior researcher who has moved twice within a 24-month window is more informative about where the frontier is heading than ten researchers who have moved once each, because the second move is filtered through the first lab's hindsight.
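A short sketch of how the three-or-more-stops subset can be separated from the two-stop careers. The three example histories are the arcs named in this essay (Sutskever, Schulman, Karpathy); the `multi_hop` helper and its `min_stops` parameter are illustrative assumptions. Counting distinct labs means a return to an earlier lab does not add a stop.

```python
def distinct_stops(labs):
    """Number of distinct labs in an ordered career history."""
    return len(set(labs))

def multi_hop(careers, min_stops=3):
    """Keep only careers with at least `min_stops` distinct labs."""
    return {
        name: labs
        for name, labs in careers.items()
        if distinct_stops(labs) >= min_stops
    }

# Career arcs as described in this essay.
careers = {
    "Ilya Sutskever": ["OpenAI", "Safe Superintelligence"],
    "John Schulman": ["OpenAI", "Anthropic", "Thinking Machines"],
    # Karpathy returned to OpenAI, so four entries but three distinct labs.
    "Andrej Karpathy": ["OpenAI", "Tesla", "OpenAI", "Eureka Labs"],
}

three_plus = multi_hop(careers)
print(sorted(three_plus))

# Share of multi-lab careers with three or more stops, from the stated counts.
share = 16 / 71
print(f"{share:.1%}")
```

On the stated counts, the three-plus subset is a little under a quarter of the multi-lab careers.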

John Schulman is the most compressed three-stop arc in the graph. The OpenAI co-founder and reinforcement-learning lead departed OpenAI in August 2024, joined Anthropic that same month as a senior research leader, then left Anthropic in November 2024 to join Thinking Machines as chief scientist. Three labs in roughly three months. The Anthropic tenure is the shortest senior-researcher affiliation captured in the dataset, and the timing matters: Schulman's departure from Anthropic predated Mira Murati's public Thinking Machines launch in February 2025 by three months, which means his second move was arranged with Thinking Machines before the company publicly existed. The "join Anthropic, then leave for a second-generation insurgent" pattern is not unique to him.

Anna Goldie and Azalia Mirhoseini are the cleanest pair-move arc. Both were senior staff scientists at Google DeepMind on the chip-design and AutoML side. Both joined Anthropic together. Both then departed Anthropic together for Ricursive in 2025. The Ricursive founding event is therefore a two-edge cohort fork from Anthropic, paralleling Schulman's move from Anthropic to a second-generation insurgent lab. The dataset's two Anthropic-to-Ricursive edges plus the two Anthropic-to-Thinking-Machines edges plus the one Anthropic-to-Goodfire advisor edge account for five of the six Anthropic-side outflows tracked in the current snapshot (against eleven inflows, giving the +5 net). Anthropic is still a net importer, but the second-derivative signal (Anthropic itself starting to seed insurgent labs) is now visible.

Andrej Karpathy is the boomerang arc. OpenAI founding member in 2015. Director of AI and Autopilot Vision at Tesla from June 2017 to mid-2022. Back to OpenAI in February 2023 as a senior research scientist. Out again in February 2024 to found Eureka Labs, an AI-education startup positioned outside the frontier challenger pattern. The Karpathy path is the dataset's only return-to-OpenAI arc and the most visible counterexample to the cohort-exodus narrative: not every high-status OpenAI departure aims at frontier displacement. Some aim at adjacent markets where the researcher's reputation translates into a different kind of moat.

Karl Tuyls is the back-to-hyperscaler counter-arc. Senior research scientist at Google DeepMind through May 2024. Co-founder of H Company from May 2024 through late 2024 (a seven-month stint that ended before H Company's largest funding round). Director of Meta AI's Paris research lab from late 2024 to present. The arc is the only confirmed re-entry into a hyperscaler lab among the multi-hop set, and it's the data point that complicates the otherwise-clean "frontier-to-insurgent is one-way" narrative. The H Company tenure being short suggests the talent-to-funding lag goes both ways in edge cases: a senior researcher can sample an insurgent and return to a frontier lab if the insurgent's trajectory underperforms expectations. The Tuyls case is one record, not a pattern, but it raises the question of how many of the post-2024 insurgent founding-team members will still be at those labs by the 2027 refresh.

Aravind Srinivas is the longest hop in the dataset by lab type. He moved from Google DeepMind through OpenAI to a consumer-search startup (Perplexity) where he serves as chief executive. The arc is one of two confirmed paths from a frontier lab to a non-frontier consumer product company (the other being Karpathy to Eureka Labs). The implication is that the senior-researcher career graph branches at the founder decision point: some founders aim at frontier challenger status with frontier-scale talent compression (the AMI / Thinking Machines / Recursive Superintelligence track), some aim at adjacent consumer products where frontier-lab tenure is a credibility moat rather than a capability requirement (the Perplexity / Eureka Labs track). The split shows that "frontier-lab departure" is not a single signal; the destination distinguishes between two different bets on where capital will flow over the next eighteen months.

Together the multi-hop set contains the highest-velocity signal in the dataset. Each three-stop arc represents two separate independent decisions to leave, which is structurally harder to fake or to walk back than a single transition. When several of these arcs cluster in time and source lab (the OpenAI to Anthropic to second-generation insurgent pattern, four cases in 2024-25), the signal compresses into a near-real-time read on the senior-researcher market.

Why the talent leads the capital

The eighteen-month-lead thesis is straightforward: senior researchers and senior research leaders decide to leave their labs before the new labs they join have published models, raised institutional rounds, or shown public revenue. Their departure is a leading indicator of where institutional capital will price the frontier eighteen months out, because the institutional capital is following the talent base.

The Atlas's funding-vintages essay documented the 2024-to-2025 vintage shift in funding: vintage-2024 and vintage-2025 frontier-insurgent rounds (Safe Superintelligence, Thinking Machines, AMI, Recursive Superintelligence, Reflection AI, H Company) priced at multiples normally reserved for frontier-incumbent rounds, on the strength of founding-team affiliations rather than product traction or revenue. That was the visible market response. The corresponding talent-side signal had already shown up: Sutskever left OpenAI in May 2024; Murati left in September 2024; LeCun left Meta in November 2025; the OpenAI to Anthropic edge had reached its current count of 8 by mid-2025. The capital priced the move only after the talent had already crossed.

The mechanism is not subtle. Limited partners and institutional allocators in 2024 and 2025 noticed that the people who would credibly build the next frontier-scale lab were leaving the existing frontier labs in named, public ways. The signal was loud enough that fund managers who priced the founding teams at frontier-scale valuations were taking less talent risk than the dollar figures implied. The capital was chasing a talent base that already existed. By the time the rounds closed and the press cycle ran, the migration had been visible to anyone reading the org-chart updates for a year.

The reason this matters for the next eighteen months is that the current talent map is showing several patterns that the capital has not yet priced in. Anthropic's 11-inflow position and its roughly 2-to-1 ratio of inflows to outflows (11 to 6) are not fully priced into the company's most recent valuation. The hyperscaler retention problem (DeepMind's 0 inflows, Meta AI's −5 net) is not visible in either Alphabet's or Meta's market capitalization in any explicit way. The European insurgent cluster (Mistral, Kyutai, AMI, H Company) has talent density that the round sizes do not yet match. The closed Chinese graph is priced into China-specific AI valuations to some extent (the gap between US-listed Chinese AI ADRs and the equivalent US-listed AI names reflects part of the geopolitical risk premium) but not in a way that distinguishes between "China cannot catch up" and "China is catching up with a separate talent base," which are very different investment theses.

If the eighteen-month-lead pattern holds, the 2027 capital cycle will reorganise around what the 2026 talent map is showing right now. The strongest signal is the Anthropic asymmetry. The second-strongest signal is the cohort-fork credibility of the AMI, Thinking Machines, and Recursive Superintelligence trio. The third-strongest signal is the hyperscaler-lab retention thinning. Each one is a specific bet, and each one is data-anchored at the level of named-individual moves rather than rumour.

The consequences

The transition graph is a present-tense description. The question that matters for the next eighteen months is what happens because of it. Three distinct consequences follow from the same set of moves, and they cut in different directions for different actors.

Setbacks for the source labs

A senior departure cannot be offset by a hire from academia, because the person leaving carries 3-7 years of accumulated frontier-lab methodology that does not transfer in a job interview. That methodology is tacit: training-stability tricks, scaling-law intuitions, eval design quirks, infrastructure shortcuts, what failed and got abandoned, how the team disagreed on direction. None of that shows up in a CV or a published paper, and most of it cannot be efficiently transmitted to the next senior hire.

OpenAI lost a near-complete rotation of its senior research leadership across 2024 and into 2025. Ilya Sutskever (chief scientist, May 2024). Mira Murati (chief technology officer, September 2024). John Schulman (reinforcement-learning lead, August 2024). Andrej Karpathy (founding member back from Tesla, February 2024). Tom Brown (GPT-3 lead author, 2021 to Anthropic and counted in this dataset's openai-to-anthropic edge). Plus Jan Leike (superalignment co-lead, May 2024 to Anthropic, on the public record but not yet in this snapshot's people layer). The company continued to ship at the model layer (GPT-5 in 2025, GPT-5.5 in April 2026), but the institutional memory of how to build a frontier model now lives at Anthropic and at Thinking Machines and at Safe Superintelligence as much as it does at OpenAI itself. The cost is not visible in current revenue. It is visible in the question of where the next generation of frontier capability is being designed, and the answer to that question is "in multiple places now, not one."

Google DeepMind's 21 outflows are the second-largest setback in the dataset, even though the lab's research output has continued at scale. The departures concentrate on the reinforcement-learning leadership stack: Ioannis Antonoglou (AlphaGo and AlphaZero co-developer) and Misha Laskin to Reflection AI. Karl Tuyls, Laurent Sifre, and Daan Wierstra (DQN co-author) to H Company. Anna Goldie, Azalia Mirhoseini, and Behnam Neyshabur to the Anthropic-and-then-elsewhere pipeline. Gemini 3 and Gemini 3.1 shipped on schedule, but the people who would have been running specific 2026-27 research bets are now running them at competitor labs. The lab continues to hire deeply from academia; the gap is at the senior-research-leadership tier where the academic hiring pipeline doesn't reach.

Meta AI's setback is the smallest in headcount terms (−5 net) but the most visible in capital-deployment terms. The June 2025 Scale AI acqui-hire cost ~$14 billion in committed Meta capital, brought Alexandr Wang and the senior Scale team in (1 transition record), and was followed in November 2025 by the Yann LeCun cohort departure that erased that gain at the senior-researcher level several times over. At roughly $14 billion for a single transition record, the capital-to-talent conversion ratio on the Scale deal was the worst in the dataset. Llama 4 shipped in 2025, but the lab that LeCun ran in Paris is now AMI, not Meta, and the European frontier-AI ecosystem reorganised around the move.

A levelled playing field for insurgents

The same departures look different from the receiving side. The talent-to-capital lag is now effectively zero for a small number of insurgent labs, because the talent base assembles in advance of the public launch and the funding round prices on that base rather than on product traction.

Safe Superintelligence raised at multi-billion-dollar valuations in 2024, with a follow-on at approximately $30 billion in 2025, on Sutskever's prior OpenAI tenure alone, with no public model. Thinking Machines Lab raised at multi-billion-dollar valuations in 2025 on Murati's, Schulman's, and Lilian Weng's combined frontier-lab tenure, with no public model. AMI raised a $1 billion seed in March 2026 on LeCun's, Laurent Solly's, Pascale Fung's, Michael Rabbat's, and Saining Xie's combined Meta AI tenure, with no public model. Recursive Superintelligence raised at frontier-scale valuations on Jeff Clune's, Josh Tobin's, and Tim Shi's combined OpenAI Open-Endedness Team tenure, with no public model. Reflection AI raised at frontier-scale valuations on Antonoglou's and Laskin's combined DeepMind reinforcement-learning tenure, with no public model.

The pattern is consistent enough to be a structural feature, not a series of accidents. The insurgent talent base IS the product premise. Investors are paying for the optionality of frontier capability landing inside a non-frontier company, with the bet being that the talent was the constraining factor at the source lab rather than the compute or the brand. If the bet is right, several of these labs ship frontier-grade models in 2026 and 2027 and the frontier four becomes a frontier ten or a frontier twelve. If the bet is wrong, the insurgent valuations re-rate down hard and the talent migrates again, which would itself be visible in the next refresh of this graph.

What this means for incumbents is that the cost of losing a senior researcher is no longer "we have to hire a replacement at academic-tier compensation." The cost is "a competitor that didn't exist 18 months ago now has a credible frontier-capability premise and several billion dollars of patience to develop it." Compensation cannot close that gap because the compensation gap was never the constraint. The constraint is the perceived option value of frontier-lab tenure as an asset class, and the option-value pricing is set by the insurgent funding rounds, not by the incumbent comp committees.

The levelling is also asymmetric across geographies. The European insurgent cluster (AMI, Mistral, Kyutai, H Company) benefits from a continent-scale talent base that the US frontier labs had been absorbing for a decade and that the European academic system kept producing through that absorption. The Chinese insurgents (DeepSeek, Moonshot, MiniMax) benefit from a separate domestic talent pipeline that the closed graph protects. Both clusters have a credible frontier-challenger position in 2026 that they didn't have in 2024, which is the levelling-at-scale outcome of the migration.

Exfiltration vs diffusion

The more uncomfortable framing of the same data is that the frontier labs are continuously exfiltrating each other's research methodology through the senior-researcher career graph, and that no legal regime currently in place can stop them. California's Business and Professions Code §16600 makes employee non-competes unenforceable. The 2024 expansion (AB-1076) further constrained the few residual non-compete-adjacent structures (training-cost recovery clauses, garden-leave provisions, choice-of-law shopping). A senior OpenAI researcher who joins Anthropic in 2024 carries roughly 3 years of OpenAI's training-stack methodology with them and cannot be legally prevented from using it at the new employer.

The 8 OpenAI to Anthropic crossings each carry that load at the high end: senior researchers with detailed working knowledge of GPT-series training stability, RLHF and RLAIF recipe choices, eval-suite design, scaling-law intuitions, and the specific failure modes the source lab had to engineer around. None of that is patentable. None of it is contained in a model-weights trade secret. All of it transfers in the researcher's head, and all of it shows up in the next lab's published model within 12 to 18 months.

The DeepMind to Anthropic crossings (Anna Goldie, Azalia Mirhoseini, Behnam Neyshabur) carry a similar load on the AutoML, scaling-law, and Gemini-team-specific methodology side. The Meta AI to Kyutai crossings (Hervé Jégou, Édouard Grave) carry the FAIR Paris speech and multilingual methodology to the French insurgent. The Cohere to Cohere for AI split (Sara Hooker, Nick Frosst) divides the original Cohere training and research stack between the commercial parent and the open-research nonprofit, which is a deliberate diffusion event rather than an unintended one.

The reason the industry treats this as feature rather than bug is structural, and it cuts two ways. The optimistic reading: frontier-to-frontier diffusion raises the floor of accessible methodology for the entire field, which accelerates the rate at which new entrants can compete. The shared baseline narrows the gap between frontier and near-frontier, which historically has produced more research output and faster iteration. The pessimistic reading: the labs that invest the most in fundamental research subsidise the labs that hire from them, and the incentive to do fundamental research at the frontier diminishes as the methodology gets exfiltrated faster than the source lab can monetize it. Both readings are partly correct. The historical pattern of AI research (open papers, shared techniques, fast publication cycles) tilts the balance toward feature, but the post-2022 commercialisation of frontier models is testing the assumption that the open-research norm survives at the senior-researcher level.

What the dataset does NOT capture, and what is worth flagging, is the rate at which actual trade secrets (training datasets, model weights, infrastructure-specific tuning, customer pipelines) cross between labs. The senior-researcher career graph diffuses methodology and intuition. It does not, in any case the dataset records, transfer model weights or proprietary training data. The boundary between "the researcher's general knowledge" and "the company's trade secret" is fuzzy in practice but firm in the cases that have actually gone to litigation. The frontier labs have so far chosen not to litigate on the senior-researcher movement question itself. That choice is itself a data point: it suggests the labs have collectively decided that attempting to enforce methodology-as-trade-secret would cost more than accepting diffusion.

Whether that calculation holds through 2027 is the open question. If Anthropic ships a Claude release in 2027 that is visibly built on training methodology Anthropic engineers acquired at OpenAI, the legal posture could change. If it doesn't, the senior-researcher market continues to function as a knowledge-diffusion engine, which is exactly the substrate this essay's leading-indicator thesis depends on. The exfiltration framing and the diffusion framing describe the same data; which one is the right framing depends on whose seat you are sitting in.

What to watch

Six concrete signals over the next twelve months are worth tracking specifically.

The first is the next OpenAI departure cohort. The dataset shows OpenAI at 22 confirmed outflows, the largest source in the system, one ahead of Google DeepMind's 21. The composition of the next 5 to 10 OpenAI departures (names, destinations, seniority) will reveal whether the outflow is plateauing or accelerating. Plateau looks like outflows decelerating below 5 per year and dominated by junior-to-mid researchers. Acceleration looks like outflows above 10 per year and dominated by senior research leaders going to a small number of named insurgent labs.
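The plateau and acceleration tests can be written down as a small classifier. This is a sketch under stated assumptions: the per-year thresholds (below 5, above 10) come from the paragraph above, while the 0.5 cut-off for "dominated by" and the `classify_outflow` name are hypothetical choices made for illustration.

```python
def classify_outflow(departures_per_year, senior_share):
    """Classify a lab's outflow over a 12-month window.

    departures_per_year: confirmed departures in the window.
    senior_share: fraction (0.0 to 1.0) of those departures who are
        senior research leaders. The 0.5 cut-off for "dominated by"
        is an assumption; the essay does not quantify it.
    """
    if departures_per_year < 5 and senior_share < 0.5:
        return "plateau"
    if departures_per_year > 10 and senior_share > 0.5:
        return "acceleration"
    # Anything between the two thresholds, or with a mixed seniority
    # profile, is left unclassified rather than forced into a bucket.
    return "indeterminate"


# Hypothetical inputs, not Atlas data.
examples = [
    classify_outflow(3, 0.2),
    classify_outflow(12, 0.75),
    classify_outflow(7, 0.5),
]
```

Leaving a middle "indeterminate" band is deliberate: the two definitions do not cover every case, and a year with 7 departures of mixed seniority supports neither reading.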

The second is whether Anthropic continues to absorb at close to 2-to-1 against its losses. The current 11-to-6 ratio (about 1.8-to-1) is the structural feature that defines the asymmetry inside the frontier four. If the ratio narrows toward 1-to-1 over the next year, the asymmetry weakens and the frontier-four-as-peers framing becomes more accurate. If the ratio holds near 2-to-1 or widens, Anthropic continues to differentiate.

The third is whether any Western-to-Chinese or Chinese-to-Western frontier-lab transition occurs in 2026. A single confirmed crossing would weaken the closed-graph thesis materially. The absence of any crossing through the year hardens the thesis into a multi-year structural fact. Either direction is informative.

The fourth is whether the hyperscaler-lab parents announce retention initiatives that show up in the transition data. Alphabet and Meta have both signalled internal AI-research reorganisation efforts in late 2025 and early 2026. The data-level test is whether the outflow from Google DeepMind and Meta AI decelerates in the 2026 data refresh, not whether the announcements emphasise retention as a priority. Announcements are cheap; the transition count is not.

The fifth is whether the exodus cohort produces a credible model release. AMI, Thinking Machines, Recursive Superintelligence, Reflection AI, H Company, and Safe Superintelligence have all assembled frontier-scale talent and frontier-scale funding. None of them has shipped a frontier-grade public model release yet. The first credible release from any one of them validates the talent-leads-capital thesis at the output layer, not just the input layer. Repeated failures to release would weaken it.

The sixth is whether one of the academic feeder labs (Inria, EPFL, Tübingen, and Max Planck in Europe, plus Mila in Canada) receives sovereign-AI funding sufficient to retain its PhD output domestically. France 2030's allocation is the largest current commitment but is still primarily flowing to commercial labs rather than to academic-research retention. A direct German, Swiss, or EU-level commitment to academic-lab retention at a scale that visibly reduces the academic-to-US-frontier outflow would invalidate the European-subsidy thesis. Absent such a commitment, the subsidy continues.

The honest summary

The Atlas dataset as of May 11, 2026 captures 226 named AI leaders, of whom 71 have moved between labs at least once, producing 62 directed transition edges across 29 source labs and 45 destination labs. The graph shows five structural patterns that are not symmetric, are not in equilibrium, and are not visible in the funding numbers at the same resolution.

The frontier four are not symmetric peers. Anthropic is a net importer (+5); OpenAI (−19), Google DeepMind (−21), and Meta AI (−5) are net exporters. The asymmetry is large enough to redefine the framing.
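The net-flow figures are plain arithmetic on inflow and outflow counts, sketched below. Anthropic's 11 and 6, Google DeepMind's 0 and 21, and OpenAI's 22 outflows are quoted in this essay; OpenAI's 3 inflows are inferred here from its 22 outflows and −19 net, and Meta AI is left out because only its net figure is quoted. The `flows` layout and helper names are illustrative assumptions.

```python
# (inflows, outflows) per lab. OpenAI's 3 inflows are inferred from the
# quoted 22 outflows and -19 net; the other counts are quoted directly.
flows = {
    "Anthropic": (11, 6),
    "OpenAI": (3, 22),
    "Google DeepMind": (0, 21),
}


def net_flow(inflows, outflows):
    """Positive for a net importer of talent, negative for a net exporter."""
    return inflows - outflows


def inflow_ratio(inflows, outflows):
    """Inflows per outflow, or None when a lab has no recorded outflows."""
    return inflows / outflows if outflows else None


# lab -> (net flow, inflow-to-outflow ratio)
summary = {
    lab: (net_flow(i, o), inflow_ratio(i, o))
    for lab, (i, o) in flows.items()
}

for lab, (net, ratio) in summary.items():
    print(f"{lab}: net {net:+d}, ratio {ratio:.2f}")
```

On these counts Anthropic's 11-to-6 position works out to about 1.8 inflows per outflow, while the two exporters sit well below 1.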

The exodus cohort is not a generic startup wave. AMI, Thinking Machines, Recursive Superintelligence, Reflection AI, H Company, and Safe Superintelligence are clean cohort forks of talent from specific source labs, and the talent base predates the company in each case. The capital is chasing a talent fact that already exists.

The hyperscaler-lab retention problem is structural. Google DeepMind shows 0 inflows in the dataset. Meta AI's −5 net flow is the visible cost of the LeCun cohort departure, and the Scale AI acqui-hire bought one transition record at a $14 billion price tag. Compensation cannot fix the gap because the gap is not compensation.

The European academic system is subsidising US frontier capacity. Inria, EPFL, Tübingen, and Max Planck all show one-way outflow to US frontier labs in the dataset, and the non-European academic labs Mila and MIT CSAIL show the same pattern. Mistral, Kyutai, AMI, and H Company are the start of a counter-trend, but the counter-trend is still small relative to the dominant flow.

The China graph is closed. Western and Chinese frontier labs do not exchange talent in either direction in the dataset, and the asymmetry vs the Western internal graph is too large to attribute to coverage gaps. The implication for the "China is catching up" framing is that the catch-up is occurring with a separate talent base, not by importing Western researchers. The talent-side US-China decoupling mirrors the capital-side decoupling, with the same architectural meaning.

Above all of this sits the timing claim. The 2024 and 2025 talent map already showed the reorganisation that the 2025 and 2026 funding map priced in. The 2026 talent map is showing patterns that the 2027 funding map has not yet priced. The Anthropic asymmetry, the cohort-fork credibility of the exodus insurgents, and the hyperscaler-lab retention thinning are the three strongest signals to watch.

The dataset is the present-tense picture. The next refresh in late July will show whether the patterns in this essay are accelerating, plateauing, or reorganising. The transition graph is the spine. The shape of the next two years of frontier-lab capital allocation is the open question.

Sources used in this piece:

- The Atlas dataset (people.json, labs.json, transitions.json) extracted 2026-05-11, covering 226 named individuals across 249 labs and 71 multi-lab career histories.

- The 62 directed transition edges captured in the affiliations layer of the people extractor.

- Anthropic's company history and founding-team affiliations, drawn from the Anthropic profile and primary press accounts of the 2021 OpenAI departures.

- Safe Superintelligence's June 2024 founding announcement and subsequent funding-round coverage; Ilya Sutskever Wikipedia entry for chronology cross-check.

- Thinking Machines Lab's February 2025 public launch announcement and team-composition coverage; Mira Murati Wikipedia entry for the OpenAI tenure timeline.

- AMI Labs March 2026 public launch announcement, and Yann LeCun Wikipedia entry for the Meta AI tenure and November 2025 departure.

- Reflection AI March 2025 public launch coverage, and Ioannis Antonoglou Wikipedia entry for the AlphaGo and AlphaZero credentials.

- Recursive Superintelligence late-2025 founding coverage, and the Jeff Clune Wikipedia entry for the OpenAI Open-Endedness Team chronology.

- Mistral AI's April 2023 founding press release, and Arthur Mensch profile for the DeepMind departure timeline.

- Kyutai's November 2023 founding announcement, and the public coverage of its initial funding by Xavier Niel, Rodolphe Saadé, and Eric Schmidt.

- Public coverage of the June 2025 Meta restructuring under Alexandr Wang, the Scale AI acqui-hire pricing, and the subsequent November 2025 LeCun departure.

- Public coverage of the November 2023 OpenAI board crisis, Mira Murati's five-day interim CEO tenure, and the September 2024 Murati departure.

- Andrej Karpathy profile for the OpenAI / Tesla / OpenAI / Eureka Labs chronology.

- Companion essays: Sovereign AI: $608 billion, four playbooks, and the Chinese state stack for the European-funding and Chinese-state context; Vintages: how AI's funding cycle decoupled from reality for the capital-side documentation of the 2024-to-2025 reorganisation.

Last updated: May 12, 2026. The Atlas people-layer refresh on May 11, 2026 was partially affected by an intra-extraction event mid-day; 22 of the 71 multi-lab transition records remain at extraction-confidence "medium" pending the next refresh. For named-individual arcs quoted in this essay, only human-verified records (73 of 226) were used for specific dates and roles. Aggregate counts are reported at the full dataset level. Send corrections.